As published in “The Next Platform”

Don’t just call it “the cloud.” Even if you think you know what cloud means, the word is fraught with too many different interpretations for too many

people. Nevertheless, the effect of cloud computing, the web, and their assorted massive datacenters has had a profound impact on enterprise computing,

creating new application segments and consolidating IT resources into a smaller number of mega-players with tremendous buying power and influence.

Welcome to the hyperscale market.

At the top end of the market, ten companies – behemoths like Google, Amazon, eBay, and Alibaba – each spend over $1 billion per year on IT. These ten

companies alone account for approximately $20 billion per year in IT consumption. Beyond that top tier, hundreds of additional companies complete

the hyperscale landscape.

Intersect360 Research first identified this segment as “ultrascale internet” in 2007, classifying companies like Google as purchasers of high-performance

and scalable technologies as part of what would later be named High Performance Business Computing, a segment of High Performance Computing (HPC)

for non-scientific business applications. Google’s 2007 acquisition of a stream processing company called Peakstream was evidence of the trend.

After nearly ten years of evolution and growth, hyperscale has emerged as its own market, demanding analysis and forecasting distinct from both HPC

and general enterprise computing. Intersect360 Research has published its foundational methodology report, “The Hyperscale Market: Definition, Scope, and Market Dynamics,”

outlining the boundaries and structure of the hyperscale market.

The key in defining the hyperscale market is to identify organizations with web-facing application infrastructures that are arbitrarily scalable with

the internet. As stated in the report, “The hyperscale market is made up of organizations with core business processes that are based on accessing,

processing, and disseminating information through the internet. Primary workloads associated with hyperscale include: web search and categorization;

online retail and customer identification; content hosting and distribution; social media; communication services; cloud application services;

machine learning (artificial intelligence, cognitive computing, pattern matching); and massively multiplayer online games (MMOGs).” Google, Amazon,

and Alibaba are in the billion-plus Tier 1 echelon, but the second and third tiers contain hundreds of examples like Tencent, Yandex, and Netflix

that still fit the hyperscale mold.

“What Makes Hyperscale ‘Hyper’?”

Among the key questions addressed in the report, Intersect360 Research tackles the justification for treating hyperscale as a separate segment. The

paramount factor is that hyperscale infrastructure tends to be distinct from general enterprise infrastructure, with different purchasing criteria.

That is to say, the hardware, software, and personnel that drive the internet-facing applications – the search engines, ad servers, games, streaming

services, portals, etc. – are generally not shared with the hardware, software, and personnel that drive everyday business – the company’s website,

ERP systems, payroll databases, etc. (In the case of something like Gmail or Salesforce.com, it is possible that an organization may use its own

online services, essentially becoming its own customer; this is common in many industries.)

In this way, hyperscale is similar to HPC. HPC infrastructure also tends to be separate from general enterprise, and it is bought and managed differently.

(The same cannot be said of big data and analytics, where we find a great deal of sharing. It is commonplace for big data and analytics applications

to be run across infrastructure that is used for day-to-day operations; organizations often do not make separate acquisitions with respect to big

data.)

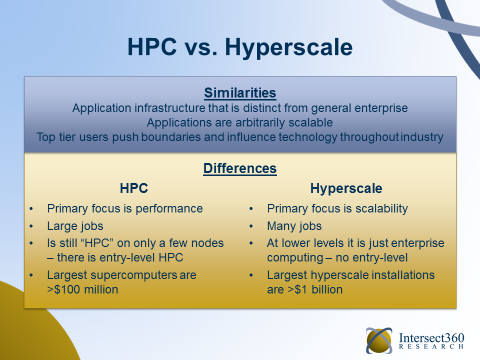

The report highlights the following similarities between hyperscale and HPC:

- High levels of performance and scalability are endemic. Both HPC and hyperscale are driven by the needs of applications to have

access to large amounts of computational power, which is accomplished via infrastructure scalability. In addition, the applications themselves

tend to be “arbitrarily scalable,” in that there is not a practical maximum to their scope. - Infrastructure is distinct from general enterprise computing. The hardware infrastructure for both HPC and hyperscale is different

than that in the enterprise setting, whose main drivers are reliability and reproducibility of results. - Use of open-source software. While open source requires the additional investment of software support and maintenance, those costs

can be amortized across a large hardware base, which makes it uniquely suitable for HPC and hyperscale computing environments. - Market tiering has a similar structure. The top tier of hyperscale is comprised of the large internet search and retail companies,

while in HPC it is comprised of the supercomputing national labs and research centers. Lower tiers are distributed across more applications

and users. In both HPC and hyperscale, top-tier customers often use their market position to deal directly with individual component providers,

and they have a great deal of leverage in negotiating procurement costs. - Technology innovation is generated in the top tier. The elite players in both segments push the boundaries and influence technology

direction with regard to architectures and standards for the rest of their respective communities.

These similarities, particularly the usage of high-performance technologies, are what drove Intersect360 Research to being counting “ultrascale internet”

as an HPC segment nearly ten years ago. However, the hyperscale market has now grown and evolved into a market on its own, with several key differences,

also outlined in the report:

- Capacity computing vs. capability computing. HPC is primarily dependent upon single-job–oriented capability computing, which is

driven by requirements to minimize time to completion for a given application. Hyperscale computing, on the other hand, is driven to a much

greater degree by multiple independent jobs (i.e. capacity requirements), originating from the need to gather, process, and disseminate information

over the internet. - Application profiles. For the most part, HPC applications run to completion in order to deliver a single result, while hyperscale

applications do not. They run continuously so as to process streams of data or tasks on a perpetual basis. - Data movement bound by internet speeds. As a result of dependence on internet connectivity, hyperscale applications have a requirement

for data movement both within and beyond the data center. - Processor architecture. Most HPC applications are highly dependent on top-end CPUs and GPUs, given that single-threaded performance

and maximum performance density is highly desirable. On the other hand, typical hyperscale applications are trivial to decompose into bite-sized

tasks and are thus naturally suited to a distributed, less computationally dense infrastructure. - Site budgets. Although the market tiers of hyperscale and HPC are similar in scope, there is an order of magnitude difference

in the size of the top budgets. Top tier hyperscale computing infrastructure budgets exceed $1 billion per year; the corresponding value for

top-tier supercomputing sites is over $100 million.

Similarities and Differences Between HPC and Hyperscale

From HPC Advisory Council, Stanford Workshop presentation, February 2016

Machine Learning: The HPC of Hyperscale

Like HPC, the hyperscale market drives technology forward, and this engenders new applications and new technologies. While the two markets are distinct,

they will have an ongoing influence on each other. Hyperscale users capitalize on scalable, high-performance technologies designed for HPC, and

HPC users are implementing software first deployed for hyperscale. One notable example in the software spectrum is OpenStack, now in use at many

HPC sites, including at the University of Cambridge in support of the Square Kilometer Array project. Over time, new parallel programming languages

such as Go (created by Google) and Swift (Apple) could migrate into HPC usage.

But well beyond the mere technology level, the greatest near-term influence of hyperscale on HPC will be in a new paradigm of computing: machine learning.

Spanning related terms like “cognitive computing” and “deep learning,” this branch of artificial intelligence has grown out of hyperscale organizations’

drive toward superiority in tasks like pattern matching and speech and image recognition, with long-range goals including autonomous vehicles and

virtual physicians.

To date, the most important research in this space has been undertaken by Tier 1 and Tier 2 hyperscale players, including Google, Facebook, Microsoft,

Amazon, Apple, Baidu, and IBM. (IBM mostly deserves credit here as a technology provider, for its “Watson” cognitive-computing platform, although

IBM is in itself a second-tier hyperscale organization, particularly in its SoftLayer cloud business.) For the time being, Intersect360 Research

is tracking machine learning as part of its hyperscale advisory service, though there are signs that the concept is beginning to take off in HPC,

particularly in financial services. (Many financial services companies, such as Paypal, can be counted as both HPC and hyperscale users, though

the infrastructures for these are usually distinct. Machine learning in these cases is predominantly associated with the hyperscale applications.)

The importance of machine learning is not only that is represents a potential breakthrough in computer science, but also that it is a special case

in the market: an HPC-style application profile inside hyperscale application sets. For machine learning application infrastructures, hyperscale

organizations will often employ high-performance technologies and architectures, such as faster processors, lower-latency networks, or accelerated

computing. This represents a small minority of the total spending inside of a Tier 1 hyperscale deployment, but is an indicator of how machine

learning could eventually have a profound effect across both HPC and hyperscale applications.

Hyperscaling into the Future

In its conclusions, the Intersect360 Research report examines the market drivers and barriers for hyperscale into the future. Notably, the barriers

are sparse. By its nature, the hyperscale market grows with connectivity to the Internet, including concepts like mobile, cloud, and Internet of

Things (IoT). While there is indeed a theoretical upper limit to how many people and things can be connected (and perhaps more notably, an upper

bound to humans’ ability to pay attention), we are not within practical reach of that limit in the near term.

The report concludes:

- As of 2016, hyperscale spending is on the order of tens of billions of dollars per year, with a market profile that makes it resistant to economic

downturns in any particular economic sector. - Its end user community is bounded only by access to the internet.

- Hyperscale computing is well-positioned to grow in concert with related rapidly evolving trends such as big data analytics, utility cloud computing,

social media, mobile computing, and the internet of things (IoT). - It encompasses a number of greenfield opportunities, including machine learning, virtual reality and smart city initiatives.

- It provides a framework that can subsume significant portions of other IT segments, such as enterprise and desktop computing.

- It is an important source of hardware and software innovation that is relevant to the entire information technology sector.

Beyond the super-billion top tier, the report says, “there are hundreds of organizations that are conducting business at hyperscale levels, utilizing

technologies and making investments in a way that is unique from their counterparts in HPC and enterprise computing.”

Intersect360 Research began tracking the trend almost a decade ago, and now hyperscale is its own market, with dramatic influence over HPC and the

entire enterprise computing space. It affects suppliers, programmers, administrators, and anyone running an application over a multi-node cluster

or off of a desktop into the cloud. From an industry analysis perspective, it’s a whole new market.